In computer labs and libraries, it now seems more common than not to see the average student flitting between tabs of their writing and ChatGPT. It simply makes life far easier. Hours once spent devising essay plans, structuring presentations, and creating data plots can now be reduced to seconds.

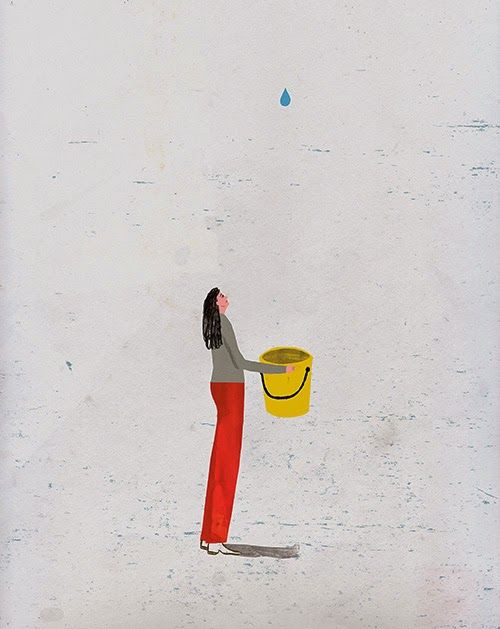

However, by the time the student closes their laptop and heads home, their study session may have consumed gallons of freshwater, with estimates suggesting that each query uses the equivalent of a small bottle. This claim has been steamrolling through social media and online articles for the past six months, and lately, it feels as though every other post I see is some variation of this particular stat. At first, I was dismissive.

As a computer science and AI undergraduate and a frequent LLM user, I was perhaps defensive of my field, and I dismissed the statistics as dogmatic, unreasoning attacks, likely from those in creative fields, whose grievances extend beyond chatbots to AI-generated images and videos. Eventually, however, I had seen enough and decided to investigate.

Does the “one bottle a query” stat hold up?

The statistic originates from a 2023 paper by researchers at the University of California, Riverside, whose authors estimate that a short ChatGPT conversation – roughly 20-50 questions and answers – requires around 500 ml of freshwater. Spread across those exchanges, that works out to roughly 10-25 ml per question, closer to a bottle per conversation than a bottle per query. This water is needed primarily to cool data centres: massive warehouses housing the servers that process and store data for AI systems like ChatGPT.

These data centres, owned and maintained by Microsoft on behalf of OpenAI, generate huge amounts of heat. The most common way of dealing with it is to pump cold water around the facility, where it absorbs the excess heat before evaporating into the atmosphere. As for training a single model – the process of feeding an AI system billions of data points so that it learns the patterns it uses to generate responses – the paper estimates that GPT-3 alone consumed around 700,000 litres of freshwater in Microsoft’s U.S. data centres, and significantly larger later models have likely required far more.

These findings are certainly disconcerting and have understandably garnered significant media traction; however, the research comes with several serious caveats. Firstly, the estimate relies on Microsoft’s publicly reported Water Usage Effectiveness (WUE) figures, which are averaged across all of its U.S. facilities. The real “water-per-prompt” figure would therefore shift substantially depending on which data centre processed your query, the cooling system it uses, and even the time of day: a facility in California is likely to consume far more water per query than one in Alaska.

A recent study by the Lawrence Berkeley National Lab reinforced this point, arguing that water-per-query statistics are essentially impossible to calculate reliably with the data currently available. Furthermore, tech companies do not distinguish between AI and non-AI workloads in their reporting, meaning that water use attributed to your ChatGPT question might partly belong to someone else’s YouTube stream.

Although there is clearly more nuance to this statistic than the viral version suggests, that does not mean the underlying concerns are misplaced. Data centres are expanding at an incredible rate, and understanding their environmental impact matters now, while the industry is still scaling and before its infrastructure becomes too entrenched to change.

So what do we really know?

Since the evidence for the water-per-query stat is shaky, it is worth basing our judgement on what can be verified at industry scale. However, this perspective is arguably even more alarming. According to the Lawrence Berkeley National Lab, U.S. data centres consumed an estimated 64 billion litres of water directly through cooling in 2023, a figure the lab warned could quadruple by 2028. A single Google facility in Iowa consumed over 4.5 billion litres of potable (drinking) water in 2024 alone, enough to supply all of residential Iowa with water for five days. When OpenAI’s GPT-4 model was trained at Iowa data centres in the summer of 2022, over 50 million litres of water were consumed in a single month, equivalent to the monthly use of a mid-sized U.S. town. The per-query stat is an oversimplification. The scale of the water use behind it, however, is not.

Not all water is equal

Once again, although the headline figures on data centres’ water consumption are alarming, taking them at face value risks ignoring a complex interplay of geography, engineering, and cooling technology. Not all of the water used by data centres is equal, and understanding how much that water matters in different contexts is crucial both to seeing the bigger picture and to moving towards accountability and intelligent solutions that reduce the industry’s environmental impact.

The most important variable is the cooling system. Most large data centres use evaporative cooling, where water absorbs heat and is vaporised – around 80% of it is lost entirely. But closed-loop systems, which recirculate coolant through sealed pipes, can reduce this loss by up to 70%, and direct-to-chip liquid cooling can cut consumption by as much as 91%.

Then there’s the question of what kind of water is being used. At Google’s data centre in Hamina, Finland, a seawater-cooling tunnel draws cold brackish water (a mixture of saltwater and freshwater) from the Baltic Sea and runs it through a closed-loop heat exchanger, so no freshwater is consumed in the process. In Sines, Portugal, Start Campus (an AI data centre company) is building Europe’s largest data centre, cooled entirely by Atlantic seawater. Meanwhile, Amazon has pledged to expand its use of recycled water and to cool over 120 data centres with treated wastewater by 2030, though only time will tell whether it follows through. The gap between a facility drawing from stressed groundwater in Arizona and one recycling municipal wastewater in Virginia is enormous, yet both are counted in the same industry-wide statistics.

Sustainable data centres in Finland

So can data centres be genuinely sustainable? Finland is now offering an unusually positive answer. In a small town north of Helsinki, a data centre has been piping its waste heat into the local district heating network for over a decade, warming the equivalent of 2,500 homes, around two-thirds of the town. On the south coast, Google’s data centre is doing the same, supplying the town of Hamina with heating for 2,000 homes completely free of charge. In Espoo, Finland’s second-largest city, the Finnish energy company Fortum is working with Microsoft to build the world’s largest data centre waste heat recovery project, which is expected to heat approximately 100,000 homes and to deliver around 2-3% of the emission cuts needed to meet Finland’s national reduction targets.

However, it would be dishonest of me to present Finland’s solution as a complete answer to the AI industry’s environmental costs. As many Finnish politicians and commentators have pointed out, data centres remain net energy consumers, however much of their waste heat engineers manage to recycle. By 2030, the data industry is estimated to account for as much as 3% of global electricity consumption, largely due to the meteoric rise in popularity of AI agents.

Furthermore, many Finns are concerned about who controls the infrastructure that now sits on their doorstep. Microsoft is an American company, and by partnering with it, the Finnish government and the city of Espoo are choosing to make the city’s heating dependent on infrastructure they do not own. When Google paused a multi-billion-euro data centre project in Muhos in late 2025, the community that had been promised free heating was left without it.
